Quick Answer: An AI reputation engine is Layer 4 of an AI-Powered Business Operating System. It monitors job completion signals in the CRM, selects the optimal timing and channel for a review request, delivers a personalized ask to the client, sends a soft reminder if no response is received, and flags any negative sentiment for human review before it becomes a public problem. A properly configured reputation engine generates 40 to 80 additional Google reviews per year from the same client volume - without anyone on the team manually asking for a single one.

Why Reviews Are a Pipeline Asset, Not a Vanity Metric

Most service business owners think of their Google review count as a reputation indicator - something that makes new clients feel more comfortable booking. That framing is accurate but incomplete. It undersells what reviews actually do.

Google reviews are a direct ranking signal in local search. Google's own guidance names relevance, distance, and prominence as the main local ranking factors, and this article uses "authority" as shorthand for prominence. Review count and review velocity - the rate at which new reviews are being added - are among the primary measurable signals of authority. A service business with 180 reviews and a 4.8 rating tends to appear higher in local search results than a competitor with 90 reviews and a 4.9 rating, everything else being equal. The difference in click-through rate between the first and second result in a local map pack is 28 to 40 percent.

This is not a soft brand benefit. It is a measurable traffic delta that translates directly into inbound lead volume. A business climbing from position 3 to position 1 in local search for its primary service keyword - driven by review velocity - typically sees a 35 to 60 percent increase in inbound call volume from organic sources. That increase does not require a single additional advertising dollar.

The second function of reviews is social proof at the point of decision. When a new prospect finds your business through any channel - search, referral, your website - the first thing they check is your Google rating and review content. A business with 12 reviews and a 4.6 rating is treated as a small operation. A business with 180 reviews and a 4.8 rating is treated as the established, trusted provider in the category. Prospects do not consciously think about this - they simply convert at a higher rate, and with less resistance to price.

The third function, which most owners miss entirely, is the review content itself as searchable text. Google indexes the text inside reviews. A review that mentions "emergency AC repair," "fast response time," or "fixed our water heater same day" becomes searchable content associated with your business. Enough reviews mentioning a service keyword and your business begins to appear for that query even when it is not in your formal listing description. This is passive SEO that compounds with every review added.

The AI reputation engine automates all three functions: review velocity, social proof accumulation, and keyword-rich content generation. And it does all three without your team manually asking a single client for a review.

The Timing Problem - Why Asking at the Wrong Moment Destroys Your Request Rate

Before the mechanism, the failure mode. The most common reason service businesses have a low review count despite serving hundreds of satisfied clients per year is not that clients are unwilling to leave reviews. It is that they are asked at the wrong moment.

There are three wrong moments that dominate manual review request attempts in service businesses.

The moment of invoice. Asking for a review at the same time as presenting a bill creates cognitive dissonance. The client's brain is in payment mode - focused on the transaction, the cost, the value exchange. A review request in this moment lands as a quid pro quo: "pay me and then say something nice about paying me." Response rates for review requests delivered at the moment of invoice are typically below 4 percent.

The follow-up email three days later. A standard email follow-up three days after a job asking for a review performs slightly better - typically 6 to 9 percent response rates - but suffers from two structural problems. Emails requesting reviews are filtered as low priority by most inboxes. And three days post-service, the emotional peak of the experience has already passed. The client has moved on mentally. The friction of stopping what they are doing to leave a review is now significant.

The generic blast to the entire client list. Some businesses run quarterly campaigns asking all past clients for reviews simultaneously. These campaigns arrive with no personalization, no context, and no connection to a recent experience - and they generate response rates of 1 to 3 percent while training clients to mentally categorize the business's communications as bulk marketing.

A properly configured AI reputation engine does not operate at any of these moments. It identifies and delivers at the one moment when the request consistently generates 15 to 25 percent response rates: the moment when the job is complete, the client has had time to feel the benefit, and the friction of leaving a review is at its lowest.

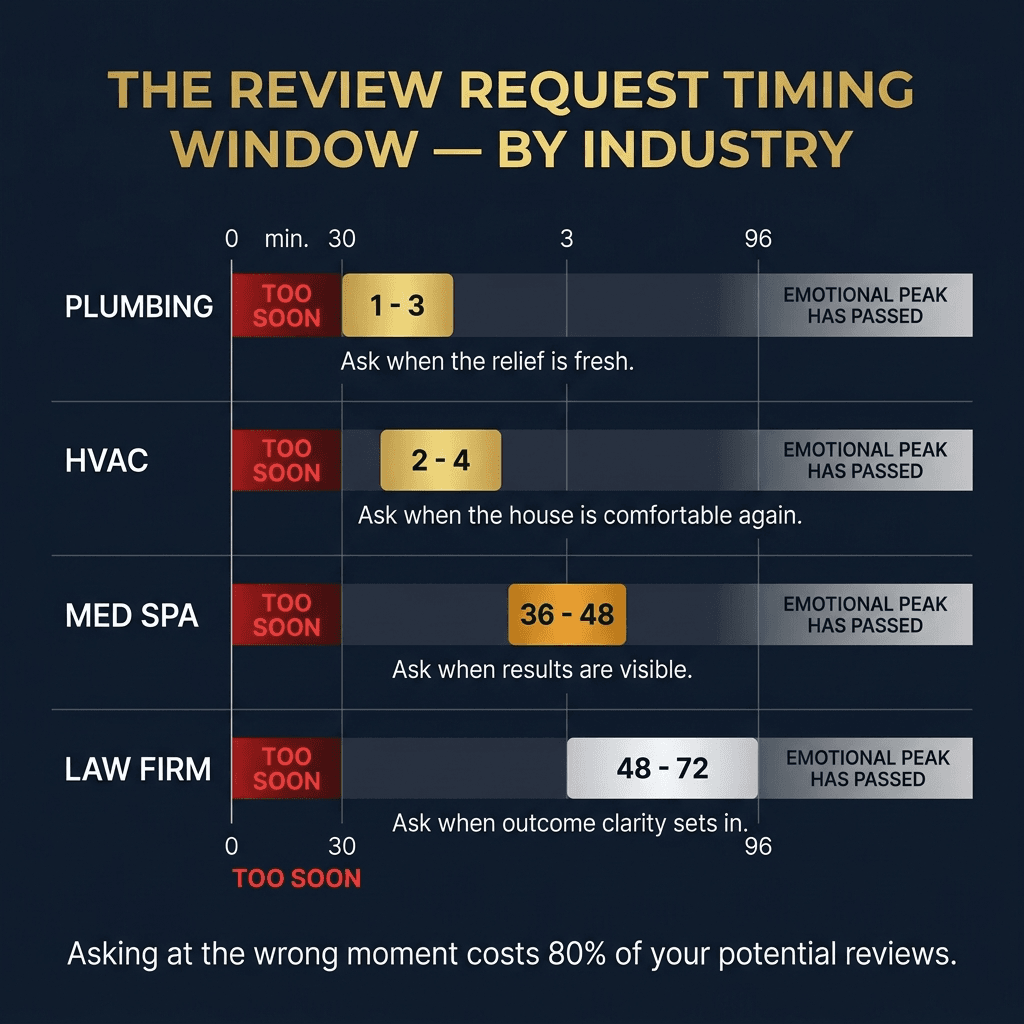

For a plumbing company, that moment is approximately 90 minutes after the technician marks the job complete in the field - when the hot water is running again, the basement is dry, and the relief is still fresh. For a med spa, that moment is 36 to 48 hours after a treatment - when the results are visible, the experience is being processed, and the client has had time to feel positive. For an HVAC company, it is 2 to 4 hours after a system service completion in summer - when the house is cool and the gratitude is immediate.

These timing windows are not arbitrary. They are the empirical result of testing thousands of review request deliveries. The AI reputation engine is configured with the specific timing window for your business type and triggers automatically when the job completion signal fires.
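As a rough illustration, the timing windows above could be stored as a small lookup table that the engine consults when the completion signal fires. This is a minimal sketch, not a definitive implementation: the dictionary keys and the `request_window` function are hypothetical names, and only the delay values come from the examples in this article.

```python
from datetime import datetime, timedelta
from typing import Dict, Tuple

# Delay windows (earliest, latest) after job completion, copied from the
# examples in the text. The dictionary keys are hypothetical labels.
REQUEST_WINDOWS: Dict[str, Tuple[timedelta, timedelta]] = {
    "plumbing": (timedelta(minutes=90), timedelta(minutes=90)),
    "hvac_summer": (timedelta(hours=2), timedelta(hours=4)),
    "med_spa": (timedelta(hours=36), timedelta(hours=48)),
}


def request_window(business_type: str, completed_at: datetime) -> Tuple[datetime, datetime]:
    """Return the earliest and latest send time for the review request."""
    earliest, latest = REQUEST_WINDOWS[business_type]
    return completed_at + earliest, completed_at + latest


# Example: a plumbing job closed at 2:00 PM gets its request at 3:30 PM.
start, end = request_window("plumbing", datetime(2024, 7, 1, 14, 0))
print(start, end)
```

Keeping the window as data rather than hard-coding it means the same trigger logic can serve different business types with only a configuration change.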

How the AI Reputation Engine Works - The Full Mechanism

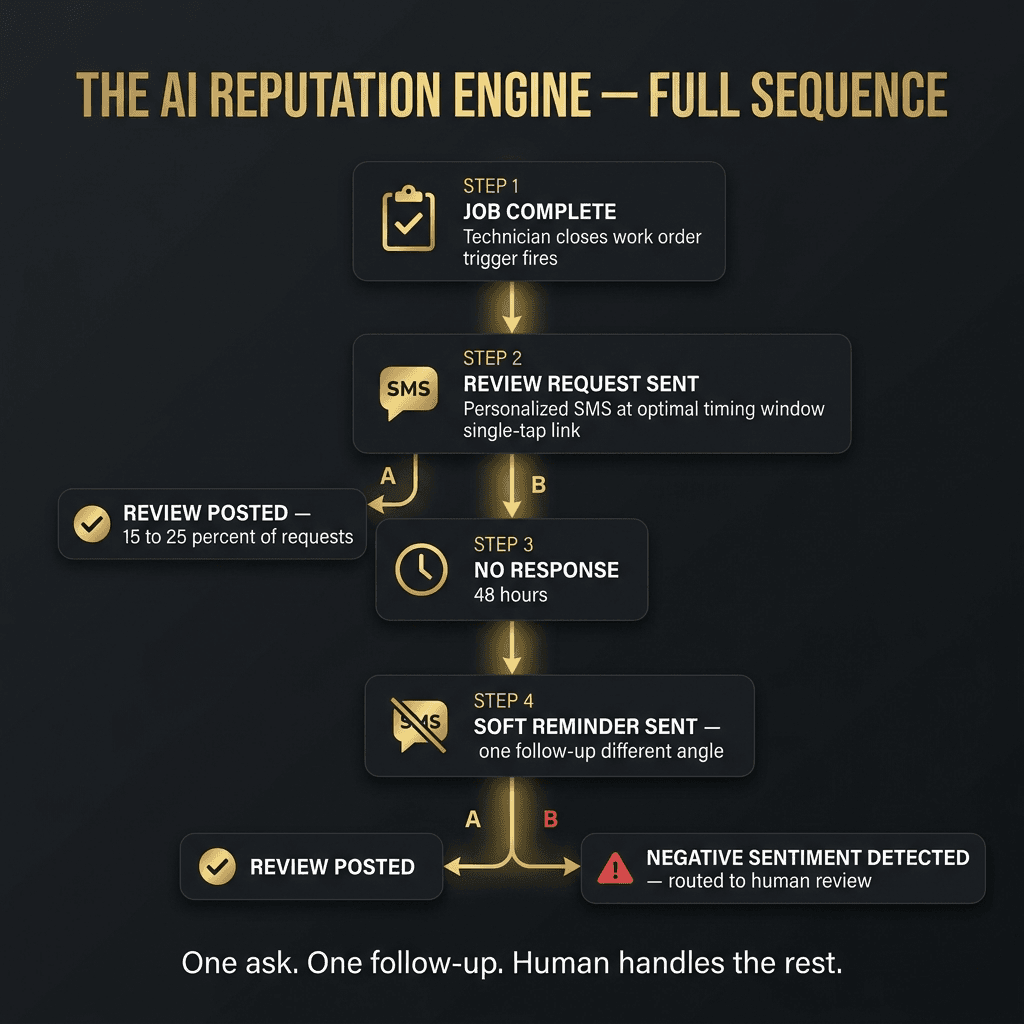

The reputation engine operates on four sequential triggers: the completion signal, the request delivery, the reminder logic, and the sentiment routing.

The completion signal. Layer 4 begins when Layer 1 (intake) or the CRM records a job completion event. For field service businesses, this is typically when the technician closes the work order in the field app. For appointment-driven businesses, it is when the appointment is marked complete in the scheduling system. For service businesses without field software, it can be triggered by invoice issuance or payment completion. The signal source varies - the trigger logic is what matters, and it must be connected to an actual completion event rather than a time-elapsed guess.

The request delivery. Within the configured time window after the completion signal, the engine delivers the review request via the highest-probability channel for the client and industry. For most trades and home services, this is SMS - the primary channel for clients in these categories and the channel with the highest response rate for review requests. The SMS contains three elements: a brief acknowledgment of the completed job (personalizing the ask), a direct statement of what is being requested ("a quick Google review"), and a single-tap link that opens directly to the review submission form - not to the business profile page, not to Google Maps, but directly to the review box.

The copy matters substantially. A message that says "Hi [Name], it was great working with you today on your AC service - if you have 60 seconds, a Google review helps families like yours find us: [link]" consistently outperforms a message that says "Please leave us a review: [link]" by a factor of 3 to 5 in response rate. The personalization elements are injected automatically from the CRM record.
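The personalization step can be pictured as a template filled from the CRM record. The sketch below assumes a CRM record shaped like a dictionary with `first_name` and `service_type` fields. Those field names, the `build_review_request` function, and the example link are illustrative assumptions, not the fields of any particular CRM.

```python
# Template wording taken from the example message in the text.
REQUEST_TEMPLATE = (
    "Hi {first_name}, it was great working with you today on your "
    "{service_type} - if you have 60 seconds, a Google review helps "
    "families like yours find us: {review_link}"
)


def build_review_request(crm_record: dict, review_link: str) -> str:
    """Fill the template, refusing to send a generic message if data is missing."""
    missing = [f for f in ("first_name", "service_type") if not crm_record.get(f)]
    if missing:
        raise ValueError(f"CRM record is missing fields: {', '.join(missing)}")
    return REQUEST_TEMPLATE.format(
        first_name=crm_record["first_name"],
        service_type=crm_record["service_type"],
        review_link=review_link,
    )


# Hypothetical record and placeholder link, for illustration only.
record = {"first_name": "Dana", "service_type": "AC service"}
print(build_review_request(record, "https://example.com/review"))
```

Raising an error on a missing field is a deliberate choice in this sketch: a request that falls back to "your recent service" is exactly the generic message the article warns against.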

The reminder logic. If the client does not respond within 48 hours of the initial request, the engine sends one soft follow-up. The follow-up acknowledges that the client may have missed the first message and restates the request with a slightly different framing - often leading with the local impact angle ("Your review helps your neighbors find reliable service in [City]") rather than repeating the same copy. The follow-up is sent only once. No third attempt is made. The total sequence is: one primary request, one soft follow-up, and then the contact is marked as reviewed or non-responding and removed from the review queue.
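The sequence described above is small enough to express as a single decision function. This is a sketch under stated assumptions: the function name and the action labels are hypothetical, and closing the contact as non-responding as soon as the one reminder has gone out is an assumption, since the article does not say how long the engine waits after the follow-up.

```python
from datetime import datetime, timedelta

REMINDER_DELAY = timedelta(hours=48)  # from the text: one follow-up after 48 hours


def next_action(request_sent_at: datetime, reminder_sent: bool,
                has_reviewed: bool, now: datetime) -> str:
    """Decide the next step in the one-request, one-reminder sequence."""
    if has_reviewed:
        return "close_reviewed"          # remove from the review queue
    if reminder_sent:
        return "close_non_responding"    # assumption: no third attempt, close now
    if now - request_sent_at >= REMINDER_DELAY:
        return "send_reminder"
    return "wait"


sent = datetime(2024, 1, 1, 9, 0)
print(next_action(sent, False, False, sent + timedelta(hours=48)))  # send_reminder
```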

Sentiment routing. When a client responds to the review request by replying to the SMS rather than clicking the link - often because they have a concern, a complaint, or a question - the engine intercepts the response and routes it to the appropriate human team member before any public review is posted. This is not review gating, which violates Google's Terms of Service. It is human-in-the-loop routing for negative sentiment: the response is captured, the team member is alerted, and the business has the opportunity to resolve the issue before the client's experience solidifies into a public one-star review.

The distinction matters. Review gating - asking clients to rate their experience before directing them to Google and only sending satisfied clients to the public review page - is an explicit violation of Google's policies and has resulted in penalties for businesses caught doing it. The sentiment routing in a properly configured reputation engine does not prevent any client from leaving a public review. It simply ensures that clients who respond with a concern are connected with a human before they default to the public channel.
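One way to picture the difference between routing and gating is in code. In the sketch below, every inbound SMS reply is sent to a human, replies that look like a concern are marked urgent, and the public review link is never withdrawn. The keyword list is a hypothetical stand-in for whatever sentiment detection a real engine uses, and the function and field names are illustrative.

```python
# Hypothetical phrases that suggest a concern. A real engine would likely
# use a sentiment model rather than a fixed keyword list.
NEGATIVE_MARKERS = (
    "not happy", "disappointed", "still leaking", "complaint", "refund", "problem",
)


def route_reply(reply_text: str) -> dict:
    """Route an inbound SMS reply to a human without gating the public review."""
    text = reply_text.lower()
    flagged = any(marker in text for marker in NEGATIVE_MARKERS)
    return {
        "notify_human": True,                      # every reply reaches a person
        "priority": "urgent" if flagged else "normal",
        "review_link_active": True,                # the link is never withdrawn
    }


print(route_reply("The faucet is still leaking"))
```

Note that `review_link_active` is always true. The routing only changes who is alerted and how fast, never whether the client can post publicly.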

The 12-Month Compound Effect - What 60 Additional Reviews Actually Produces

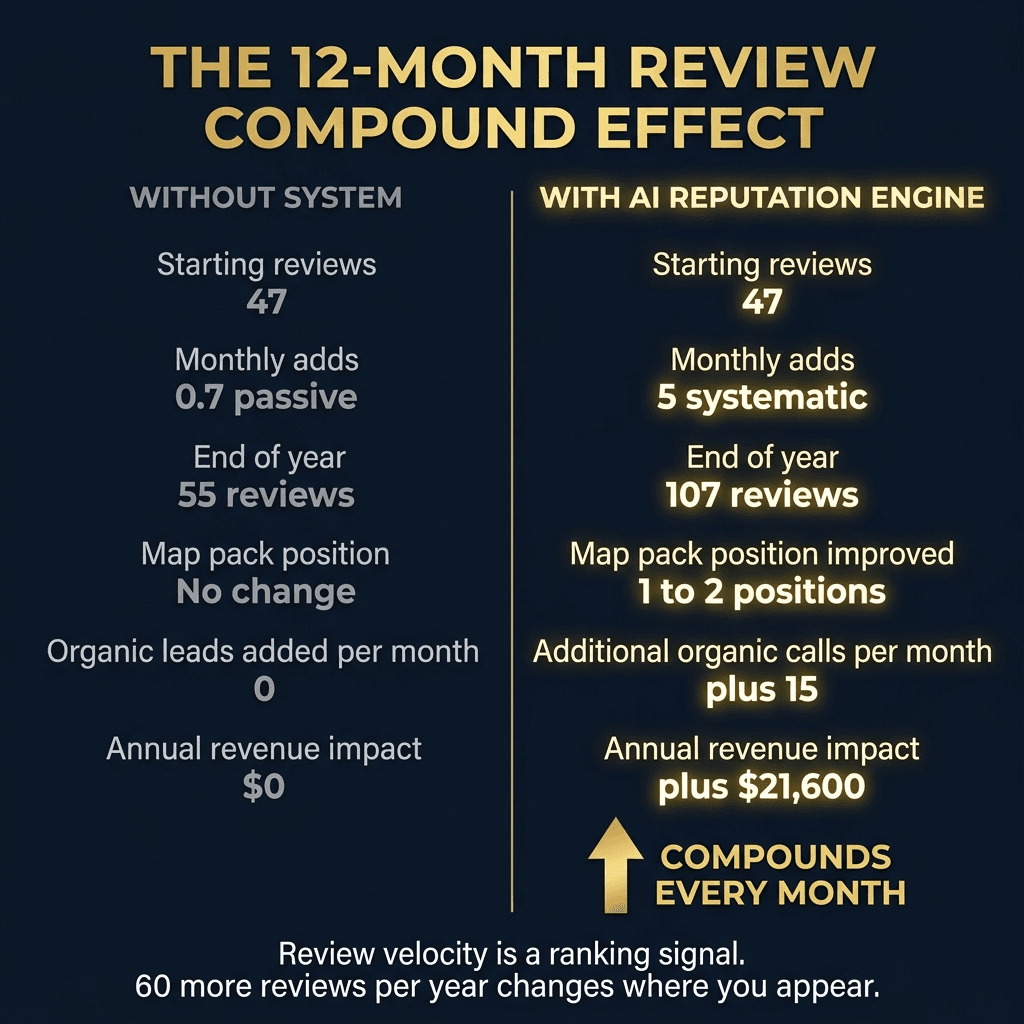

The clearest way to illustrate the value of Layer 4 is to model what happens to a service business that adds 60 reviews over 12 months versus one that adds 8 reviews over the same period - which is roughly what most service businesses accumulate through passive, manual review requests.

A business starting at 47 reviews and adding 60 over 12 months (5 per month) ends the year at 107 reviews. At that volume, assuming a rating above 4.6, the business will typically advance at least one position in the local map pack for its primary service keyword. In a competitive local market, that advancement translates to a traffic increase of 25 to 40 percent from organic local search alone. At a 20 percent conversion rate on organic search traffic and an average ticket of $600, a business generating 15 more calls per month from better map pack position books 3 additional jobs and earns $1,800 per month in additional revenue - $21,600 per year - from the review velocity alone.
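The arithmetic behind that model is simple enough to check directly. The sketch below reproduces the figures from the paragraph above. The function and variable names are illustrative, and the inputs are this article's example values, not benchmarks for any specific business.

```python
def added_monthly_revenue(extra_calls: int, conversion_rate: float,
                          average_ticket: float) -> float:
    """Extra calls x share that book x average ticket, rounded to cents."""
    return round(extra_calls * conversion_rate * average_ticket, 2)


starting_reviews = 47
reviews_added = 5 * 12                      # 5 per month for 12 months
ending_reviews = starting_reviews + reviews_added

monthly = added_monthly_revenue(15, 0.20, 600)
annual = monthly * 12
print(ending_reviews, monthly, annual)  # 107 1800.0 21600.0
```

Swapping in your own call volume, conversion rate, and average ticket gives a version of the same estimate for your operation.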

At 12 months, the compound effect continues. The business now has 107 reviews. A competitor who started from the same 47 and did not implement Layer 4 has 55. The gap makes the choice visually obvious to any new prospect doing a comparison. The higher review count signals not just authority to Google but safety to prospects - the more people who have publicly attested to the quality of the experience, the less risk a new client perceives in choosing the business. This is a psychological dynamic that does not diminish as review count grows. It compounds.

The review content compound is also real. At 107 reviews, the text of those reviews will mention dozens of specific service types, technician names, locations, and experience descriptors. Each mention is indexed by Google and associated with the business. The business gains searchable coverage for long-tail queries it never explicitly targeted.

A business that starts the reputation engine today will not see the full compound effect for 6 to 8 months. But the compound effect exists regardless of when it starts - and every month of delay is a month of the curve that cannot be recovered.

What a Properly Configured Reputation Engine Looks Like

The technology that powers AI reputation engines is increasingly commoditized. The configuration is where the value is created or lost. Five configuration elements determine whether a reputation engine performs at the top of the range or the bottom.

Timing calibration by industry. The completion-to-request timing window must be set for your specific service type. A one-size-fits-all two-hour trigger works for some industries and fails for others. A med spa using a two-hour trigger is requesting a review before the client can see their Botox results. A plumbing company using a 48-hour trigger is requesting a review after the client has fully moved on from the experience and the emotional peak has passed. The timing calibration is the highest-leverage configuration decision in the entire engine.

Personalization depth. The review request must reference the specific job or appointment. "Your recent HVAC tune-up" is personalized. "Your recent service" is not. The CRM must have a field for service type that is consistently populated at job creation, and the reputation engine must pull that field into the message template. Without this, the request sounds generic, and generic requests generate generic response rates.

Channel selection by client segment. SMS is the default channel for trades, home services, and medical aesthetics. Email is the appropriate default for financial services, legal practices, and professional services where SMS feels presumptuous. The channel should be configurable by client type or service category, not set globally for all contacts.
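Configurable channel selection might look like a category-to-channel map with an optional per-client override. This is a minimal sketch: the category labels, the `client_override` parameter, and the function name are assumptions made for illustration, and the defaults follow the segments named above.

```python
from typing import Optional

DEFAULT_CHANNEL = "sms"

# Defaults by service category, following the segments named in the text.
CHANNEL_BY_CATEGORY = {
    "trades": "sms",
    "home_services": "sms",
    "medical_aesthetics": "sms",
    "financial_services": "email",
    "legal": "email",
    "professional_services": "email",
}


def select_channel(service_category: str, client_override: Optional[str] = None) -> str:
    """Pick the request channel: a valid client preference wins, then the category default."""
    if client_override in ("sms", "email"):
        return client_override
    return CHANNEL_BY_CATEGORY.get(service_category, DEFAULT_CHANNEL)


print(select_channel("legal"))            # email
print(select_channel("trades", "email"))  # email
```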

Exit conditions. A client who has already left a review should never receive a review request. The engine must monitor for reviews posted by clients in the database and suppress the request sequence for any client who has already left one. This requires integration between the review monitoring system and the CRM - a detail that most commodity reputation tools skip.
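In code, the exit condition is a check that runs before any request is queued. The sketch below assumes the review monitoring system can provide a set of client IDs matched to posted reviews, and that the CRM tracks contacts already closed out of the sequence. Both sets and the function name are hypothetical.

```python
from typing import Set


def should_request_review(client_id: str,
                          reviewed_client_ids: Set[str],
                          closed_client_ids: Set[str]) -> bool:
    """Return False for clients who already reviewed or already finished the sequence."""
    if client_id in reviewed_client_ids:
        return False  # already left a review: never ask again
    if client_id in closed_client_ids:
        return False  # sequence completed without a response: do not restart it
    return True


reviewed = {"c-101", "c-204"}
closed = {"c-330"}
print(should_request_review("c-101", reviewed, closed))  # False
print(should_request_review("c-999", reviewed, closed))  # True
```

Whether a non-responding client should become eligible again after a later job is a policy decision this sketch does not settle.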

Negative review response protocol. A public negative review that goes unaddressed for more than 24 hours does more damage than the negative review itself. The engine should alert the designated team member immediately when a new review below 3 stars is detected, include a copy of the review text, and optionally include a suggested response that the team member can approve and post within the 24-hour window. A fast, professional response to a negative review often carries more weight with prospects than the review itself - a business that responds thoughtfully to a 2-star review demonstrates the kind of customer service that makes new clients more confident, not less.
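The alert rule above can be sketched as a function that turns a newly detected review into an alert with a response deadline. The review dictionary shape, the function name, and the alert fields are assumptions for illustration. The star threshold is kept as a parameter so it can be tuned.

```python
from datetime import datetime, timedelta
from typing import Optional

RESPONSE_WINDOW = timedelta(hours=24)  # from the text: respond within 24 hours


def build_alert(review: dict, alert_below_stars: int = 3) -> Optional[dict]:
    """Return an alert for reviews under the threshold, or None otherwise."""
    if review["stars"] >= alert_below_stars:
        return None
    return {
        "stars": review["stars"],
        "review_text": review["text"],                      # copy of the review
        "respond_by": review["posted_at"] + RESPONSE_WINDOW,
    }


# Hypothetical review, for illustration only.
review = {"stars": 2, "text": "Tech arrived late.", "posted_at": datetime(2024, 5, 6, 10, 0)}
print(build_alert(review))
```

A suggested draft response could be attached to the alert as an extra field, left for the team member to approve before anything is posted.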

The One-Number Test for Your Current Layer 4

Before implementing a reputation engine, run one calculation on your current operation.

Take the number of jobs your business completed in the last 12 months. Multiply it by 0.12 - a conservative review generation rate of 12 percent for a properly configured engine. That is the number of reviews you should have accumulated this year. Now compare it to the number of reviews you actually received.

The gap is your Layer 4 revenue leak. At an average ticket of $600 and a 35 percent conversion lift from advancing one map pack position, the revenue value of closing that gap is calculable and specific to your operation.
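The one-number test fits in a few lines. In the sketch below, the 12 percent target rate comes from the text, while the function name and the example inputs (500 jobs, 10 reviews) are hypothetical.

```python
def review_gap(jobs_last_12_months: int, reviews_received: int,
               target_rate: float = 0.12) -> dict:
    """Compare actual reviews to the number a 12 percent rate would produce."""
    expected = round(jobs_last_12_months * target_rate)
    current_rate = (reviews_received / jobs_last_12_months
                    if jobs_last_12_months else 0.0)
    return {
        "expected_reviews": expected,
        "gap": max(expected - reviews_received, 0),
        "current_rate": current_rate,
    }


# Hypothetical business: 500 completed jobs, 10 reviews received.
print(review_gap(500, 10))
```

For that example, the target is 60 reviews, the gap is 50, and the current rate is 2 percent of completed jobs.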

Most service businesses that run this calculation discover that their current passive review accumulation is running at 1 to 3 percent of completed jobs - meaning 97 to 99 percent of completed jobs, most of them for satisfied clients, leave no public record of the experience. The AI reputation engine converts that passive percentage into an active one.

The clients who would leave reviews are not resistant. They are simply never asked at the right moment, on the right channel, with the right message. Layer 4 fixes all three simultaneously.

The Front Door Diagnostic includes a review velocity benchmark that compares your current annual review accumulation rate to your job volume and tells you exactly how far below potential your reputation engine is running - and what a 12-month acceleration looks like in map pack position and inbound lead volume.

Frequently Asked Questions

What is an AI reputation engine for service businesses?

An AI reputation engine is the review automation layer of an AI Business Operating System. It sends a personalized review request to every customer at the optimal moment after every completed job or appointment - automatically. It monitors incoming reviews, alerts the owner to negative reviews for immediate response, and tracks review velocity (how many new reviews per week) as a core business metric. It is not a review gating tool - it sends every customer through the same process regardless of predicted sentiment.

When is the best time to send a Google review request?

The optimal timing depends on the industry. For trades (HVAC, plumbing, roofing), the best window is 2 to 4 hours after job completion - when the relief and satisfaction of a solved problem are at their highest. For healthcare (dental, chiropractic, PT), 24 hours after the appointment works best - enough time to reflect on the experience. For project-based businesses (general contractors, painters), the best window is 24 to 48 hours after project completion - when the client is still enjoying the result. Timing matters: review requests sent at the right moment generate 3 to 5 times the response rate of requests sent days later.

What response rate should a service business expect from review requests?

Well-timed, personalized review requests sent via SMS typically achieve 8 to 20 percent response rates depending on the industry and how personal the message is. Generic email review requests sent days after service average 1 to 3 percent. The difference is timing, channel (SMS outperforms email 3 to 4x for review requests), and personalization (using the client's name and referencing their specific service or outcome).

How many Google reviews does a service business need to rank in the top 3 on Google Maps?

It depends on the market and category. In a mid-size market (city population 50,000 to 500,000), most service business categories require 80 to 200 reviews with a 4.7 or above rating to compete for top-3 positions. In large markets (500,000+), the requirement is typically 200 to 500+. The key metric is not just total count but review velocity - Google's algorithm favors businesses that are consistently accumulating new reviews over those with a large static count from years ago.

Can AI help respond to negative Google reviews?

Yes. The AI reputation system alerts the business owner within minutes of any review below 4 stars. It can also draft a suggested response - empathetic, professional, and designed to demonstrate to other readers that the business takes feedback seriously. Responding to negative reviews within 24 hours reduces their long-term ranking impact and demonstrates the kind of accountability that actually builds trust with prospective customers reading the reviews.

How long does it take for Google reviews to improve local search ranking?

Review velocity improvements typically begin showing map pack movement within 3 to 6 months. The compounding effect accelerates over time: a business generating 15 new reviews per month for 12 months accumulates 180 reviews - enough to dominate most local markets in their category. Businesses that sustain review generation for 18 to 24 months typically create a competitive moat that is nearly impossible for slower-moving competitors to close.

Vikram Roy is the Founder of The Quiet Protocol, a Toronto-based AI systems firm serving service businesses across the Greater Toronto Area, Canada, and the United States. He works directly with home service companies, dental practices, clinics, and local businesses to install AI operating systems that capture more leads, reduce no-shows, and grow revenue. All content is written from Toronto, Ontario.


